Case Study: Marketplace MVP Built in 12 Weeks

This marketplace MVP case study breaks down how a two-sided product was scoped, what was intentionally manual, and how the first release was kept commercially useful.

Key Takeaways

- Fast launches usually come from scope discipline, not feature volume.

- Manual workflows can be a rational choice in version one.

- Good case studies reveal what was deliberately excluded.

- Predictable demos and quick founder decisions keep projects moving.

- A useful launch creates the right next questions, not a false sense of completion.

Buyers and founders weighing a marketplace build need a clear answer, not a vague range or a stack of agency buzzwords. This guide walks through a marketplace MVP case study in commercially realistic terms so you can make better product, budget, and delivery decisions.

The short version: good launches rarely come from doing more. They come from sequencing the right work, cutting speculative ideas, and keeping founder decisions close to the delivery team.

Quick answer

A marketplace MVP case study should be evaluated on scope, delivery risk, and business usefulness, not just on a headline number or a trend-driven opinion.

- The most important work is usually deciding what not to build in version one.

- Manual steps are acceptable when they reduce product risk.

- Fast launches come from sequencing and clarity, not feature volume.

Who this guide is for

This guide is for founders who want a realistic picture of how MVP delivery works when scope is treated seriously and the first release is designed to learn quickly.

What made the delivery timeline credible

The timeline worked because the team kept requirements visible and made founder decisions quickly. In most case studies like this, the biggest accelerant is not heroic engineering. It is sharp scoping, predictable review cycles, and ruthless exclusion of low-value requests.

That is also why the first release looked smaller than the full vision. Version one was built to prove traction, not to satisfy every long-term roadmap idea.

| Marketplace area | Initial approach | Reason |
|---|---|---|
| Supply onboarding | Manual approval | Quality mattered more than automation |
| Matching | Semi-manual logic | Needed learning before full automation |
| Payments | Simple transaction flow | Reduced legal and ops complexity in v1 |

Two-sided marketplaces are complex: buyers, sellers, matching, payments, trust. This case study shows how we built one in 12 weeks with a lean scope.

The Problem

Client: Industry veteran, first-time tech founder. Knew the domain; needed a technical partner.

Idea: Connect service providers with local businesses. Think "Uber for B2B services" in a niche vertical.

Challenge: Prove demand before building a full platform. Budget: ~$55K. Timeline: 12 weeks to soft launch.

The Approach

Phase 1: Discovery (1.5 weeks)

We mapped the core flows: provider onboarding, request creation, matching, and payment. From those we identified the minimum scope: providers could list services, and buyers could request and pay. There was no complex matching algorithm in v1; manual matching was acceptable for validation.

Phase 2: Build (9 weeks)

Team: 2 full-stack developers. Stack: Next.js, Supabase, Stripe Connect.

Key decisions:

- Stripe Connect for split payments (platform + provider)

- Simple provider verification (manual approval, not automated)

- Email-based notifications (no in-app messaging in v1)

- No mobile app—responsive web only
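
The split-payment decision above can be sketched as a small calculation. This is a minimal illustration, not the project's actual code: the 10% commission (`PLATFORM_FEE_BPS`) and the `splitPayment` helper are assumptions, since the case study does not state the platform's take rate or how the charge was structured. With Stripe Connect, a value like `platformFeeCents` would typically be passed as the application fee on the charge.

```typescript
// Hypothetical take rate: the case study does not state the real commission.
const PLATFORM_FEE_BPS = 1000; // 10% expressed in basis points (assumption)

interface PaymentSplit {
  totalCents: number;
  platformFeeCents: number;
  providerPayoutCents: number;
}

// Split a charge between platform and provider using integer cents,
// so rounding never creates or loses money.
function splitPayment(
  totalCents: number,
  feeBps: number = PLATFORM_FEE_BPS
): PaymentSplit {
  if (!Number.isInteger(totalCents) || totalCents <= 0) {
    throw new Error("totalCents must be a positive integer");
  }
  if (!Number.isInteger(feeBps) || feeBps < 0 || feeBps > 10000) {
    throw new Error("feeBps must be an integer between 0 and 10000");
  }
  // Round the fee down; the provider receives the remainder.
  const platformFeeCents = Math.floor((totalCents * feeBps) / 10000);
  return {
    totalCents,
    platformFeeCents,
    providerPayoutCents: totalCents - platformFeeCents,
  };
}

// Example: a $250.00 job at the assumed 10% commission.
const exampleSplit = splitPayment(25000);
console.log(exampleSplit);
```

Working in integer cents and giving the provider the remainder keeps the two amounts summing to the original charge, which matters once payouts are reconciled by hand.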

Phase 3: Launch (1.5 weeks)

We soft-launched with 15 providers and 30 buyers from the founder's network, and onboarded the first 50 users manually to catch edge cases.

Trade-offs

- No in-app messaging: Users used email/phone. Added in v2.

- Manual matching: Founder matched requests to providers initially. Algorithm came later.

- Simple search: Category + location. No advanced filters.
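
A category-plus-location search over manually approved providers needs very little code, which is part of why it suited v1. The sketch below is illustrative only: the `Provider` shape, the `searchProviders` helper, and the sample data are hypothetical and not taken from the project.

```typescript
// Manual approval is modeled as a status the founder sets by hand.
type ApprovalStatus = "pending" | "approved" | "rejected";

interface Provider {
  id: string;
  name: string;
  category: string;
  city: string;
  status: ApprovalStatus;
}

// Normalize user input so " Austin " and "austin" compare equal.
const normalize = (value: string): string => value.trim().toLowerCase();

// v1-style search: exact category and city match, approved providers only.
function searchProviders(
  providers: Provider[],
  category: string,
  city: string
): Provider[] {
  return providers.filter(
    (p) =>
      p.status === "approved" &&
      normalize(p.category) === normalize(category) &&
      normalize(p.city) === normalize(city)
  );
}

// Hypothetical sample data for illustration.
const sampleProviders: Provider[] = [
  { id: "p1", name: "Acme Cleaning", category: "cleaning", city: "Austin", status: "approved" },
  { id: "p2", name: "Bright Cleaning", category: "cleaning", city: "Austin", status: "pending" },
  { id: "p3", name: "Cool HVAC", category: "hvac", city: "Austin", status: "approved" },
  { id: "p4", name: "Dallas Cleaning", category: "cleaning", city: "Dallas", status: "approved" },
];

const searchResults = searchProviders(sampleProviders, "Cleaning", " austin ");
console.log(searchResults.map((p) => p.name));
```

Filtering on `status` in the same function means an unapproved provider can never appear in results, so the manual approval step doubles as the quality gate.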

The Outcome

Launch: 12 weeks from kickoff. First transaction in week 13.

6 months post-launch: 80 providers, 200+ buyers, $15K GMV/month. The founder raised a seed round to build v2 with automated matching and a mobile app.

Key lesson: Manual processes are fine for validation. Automate when you have proof.

Conclusion

Marketplaces can be validated with lean builds. Focus on the core loop (request → match → pay), defer complexity, and use manual processes until you have traction.
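
The core loop (request → match → pay) can be expressed as a tiny state machine. This is a hedged sketch, not the project's implementation: the `ServiceRequest` type, the status names, and the helper functions are assumptions made for illustration. The match step takes a provider ID as plain input, so a person can supply it in v1 and an algorithm can supply it later.

```typescript
type RequestStatus = "requested" | "matched" | "paid";

interface ServiceRequest {
  id: string;
  buyerId: string;
  status: RequestStatus;
  providerId?: string;
}

// Each status has exactly one legal successor; "paid" is terminal.
const ALLOWED_NEXT: Record<RequestStatus, RequestStatus | null> = {
  requested: "matched",
  matched: "paid",
  paid: null,
};

// Return a copy of the request in the new status, or throw on an illegal jump.
function advance(req: ServiceRequest, to: RequestStatus): ServiceRequest {
  if (ALLOWED_NEXT[req.status] !== to) {
    throw new Error(`Cannot move request ${req.id} from ${req.status} to ${to}`);
  }
  return { ...req, status: to };
}

// In v1 the providerId would come from a manual decision by the founder.
function matchRequest(req: ServiceRequest, providerId: string): ServiceRequest {
  return { ...advance(req, "matched"), providerId };
}

function payRequest(req: ServiceRequest): ServiceRequest {
  return advance(req, "paid");
}

// Hypothetical walk through the loop.
const createdRequest: ServiceRequest = {
  id: "req_1",
  buyerId: "buyer_1",
  status: "requested",
};
const matchedRequest = matchRequest(createdRequest, "prov_1");
const paidRequest = payRequest(matchedRequest);
console.log(paidRequest);
```

Because the transitions are explicit, a request cannot be paid before it is matched, even while matching is still done by hand.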

Why this approach worked

The delivery approach worked because the team made clear tradeoffs: manual where learning mattered, automated where repeatability mattered, and intentionally unfinished where extra polish would not change adoption.

Common case-study misread

Readers often assume the speed came from coding faster. In most real launches, speed comes from cutting the right work, reviewing early, and surfacing uncertainty as it appears instead of hiding it until the end.

What to copy from this case

- Define the first value loop clearly.

- Use demos to test assumptions early.

- Keep manual operations where they protect learning.

- Document exclusions so scope stays honest.

- Launch with a plan for the next iteration.

If you are planning something similar, explore how to build a startup MVP, build vs buy vs partner, and our product development process.

What to do next

Use this case study as a reminder that credible speed comes from scope discipline, not maximum ambition. Map the first value loop, keep manual work where it buys learning, and launch with a clear next iteration in mind. Our product development process and software consulting support can help if you are planning a similar build.

Apply this in a real project

If you’re planning to build or improve software based on these ideas, our custom software development services can help you define scope, reduce delivery risk, and ship maintainable systems.

For founder-led execution, explore our product development services and software consulting services to turn requirements into a working release with clear ownership.

Expert Insights

Speed came from subtraction

In most credible launches, what the team removed from scope mattered as much as what they built.

Founder responsiveness shapes delivery pace

Quick decisions on tradeoffs, copy, priorities, and workflow details often save more time than any individual engineering tactic.

What Readers Say

"Manual matching felt wrong at first, but it let us validate fast. We automated in v2 with real data."

Founder, Marketplace

Industry veteran

Reader Questions

How much of this timeline depends on a strong team?

A lot, but team quality alone is not enough. Clear scope and quick decisions are just as important.

Can a non-technical founder manage a case like this?

Yes, if the product goal is clear and the delivery partner makes tradeoffs, risks, and progress easy to understand.

What if the first launch underperforms?

That is still useful if the product was scoped to learn. The data should guide what changes next instead of turning the launch into a sunk-cost problem.

Related Articles

Technology • 3 min

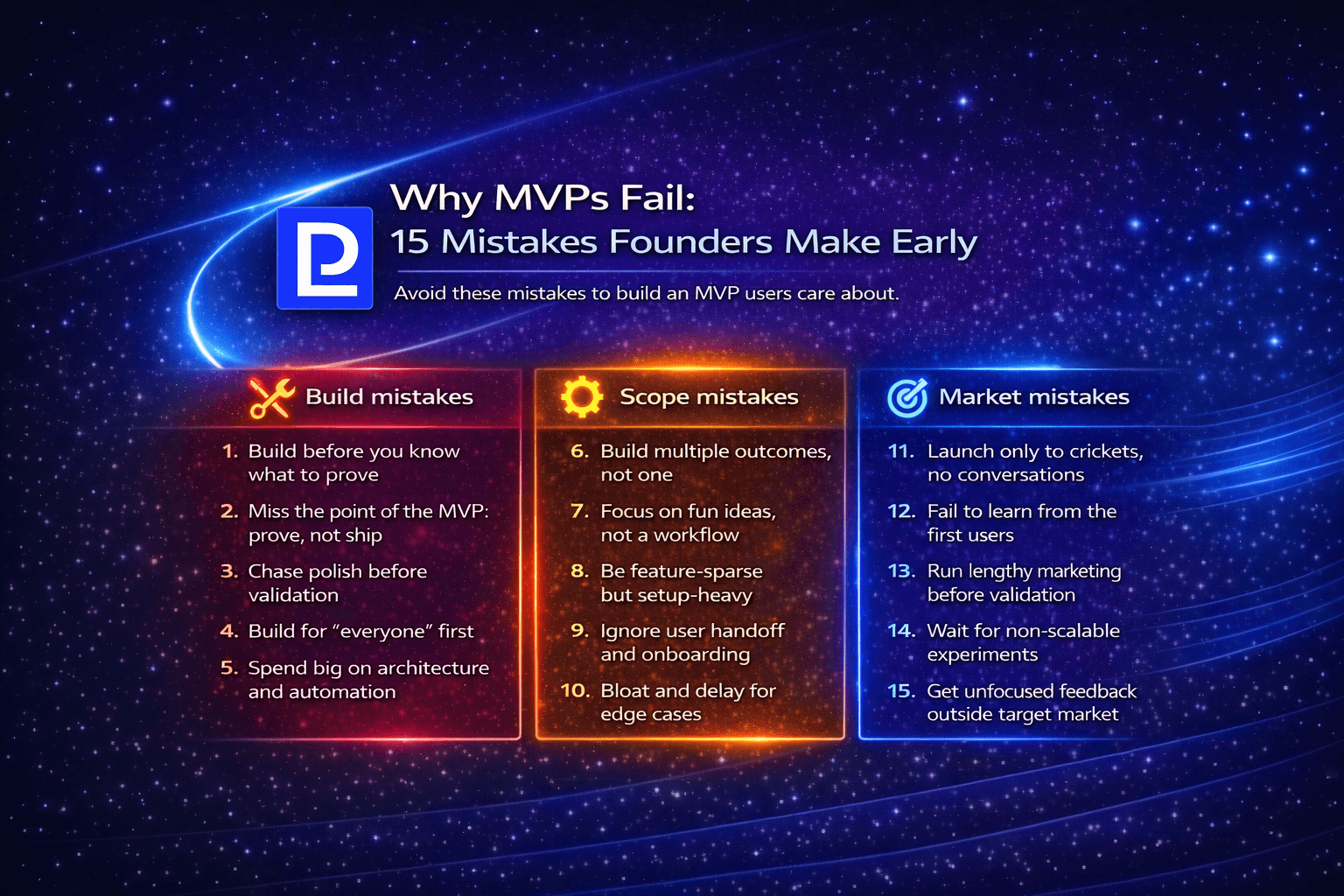

Why MVPs Fail: 15 Mistakes Founders Make Early

A founder-focused guide to the 15 most common MVP mistakes: what causes failure, how to avoid them, and how to build MVPs that actually validate.

Technology • 3 min

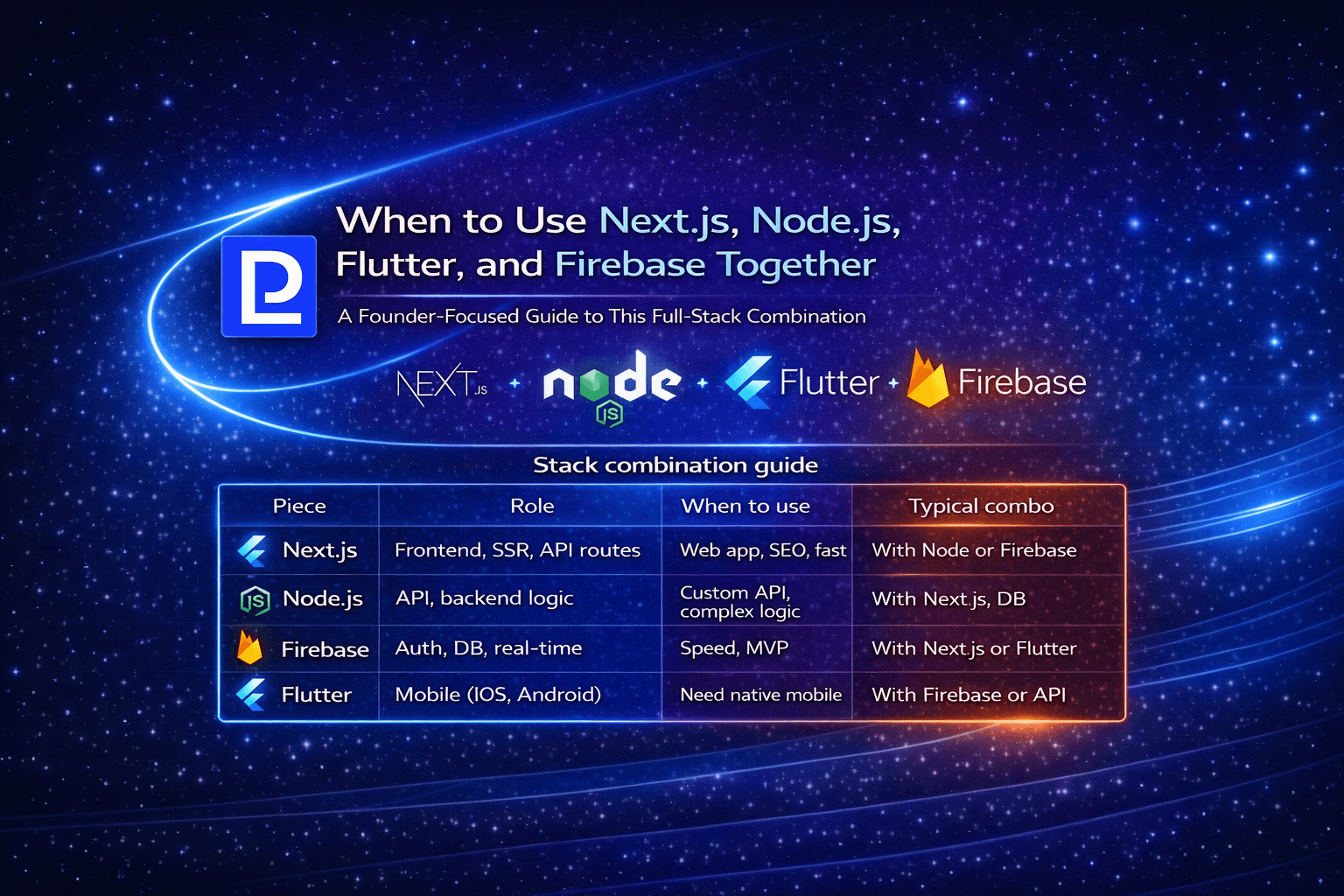

When to Use Next.js, Node.js, Flutter, and Firebase Together

A founder-focused guide to combining Next.js, Node.js, Flutter, and Firebase: when the stack makes sense and how to use them together.

Technology • 3 min

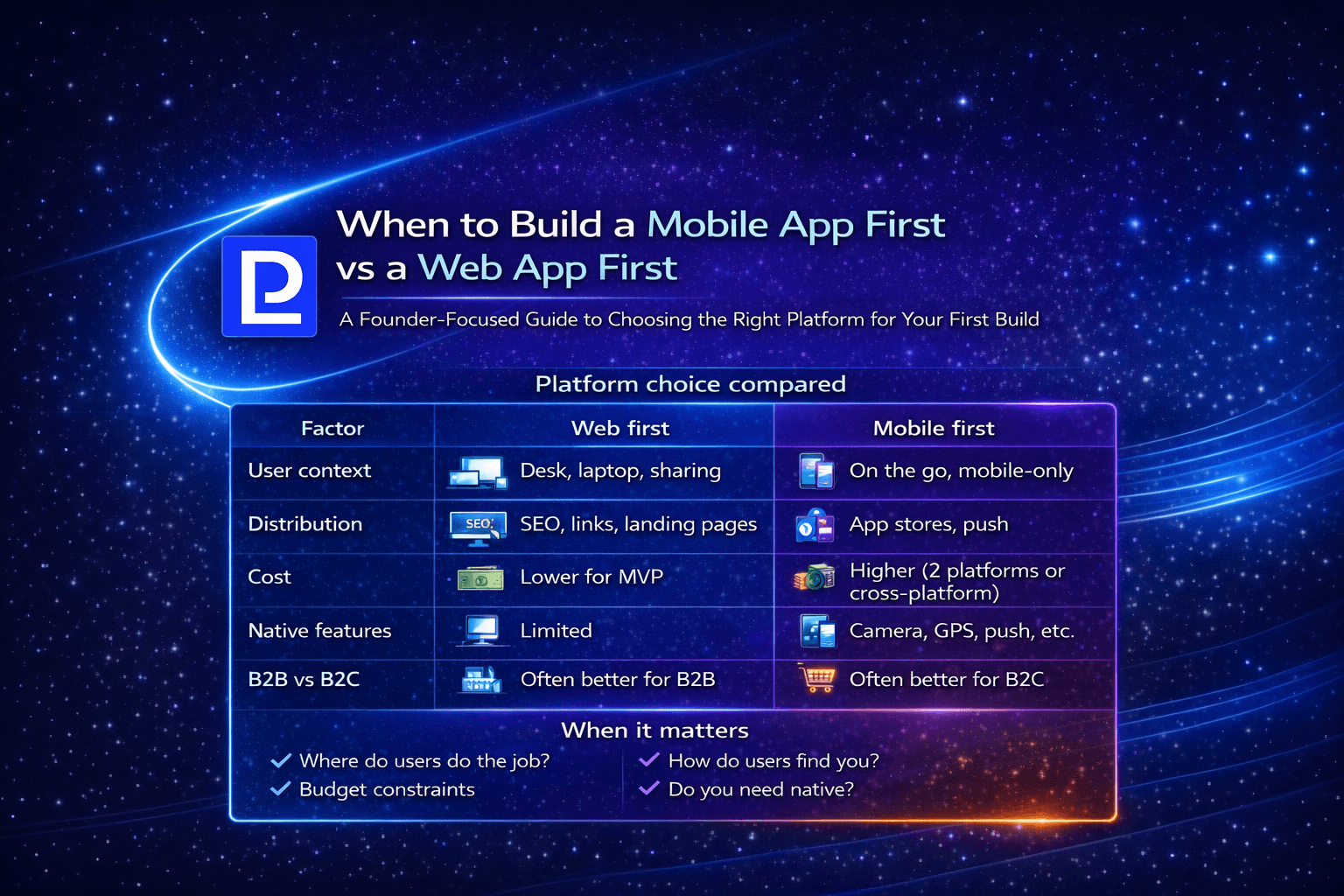

When to Build a Mobile App First vs a Web App First

A founder-focused guide to choosing mobile-first vs web-first: when each makes sense, tradeoffs, and how to decide for your product.